ROAS looks simple on the surface, until it suddenly drops. And when it does, uncertainty kicks in fast.

According to the CMO Council, 92% of marketers struggle to accurately attribute ROI across multiple channels. At the same time, marketing managers spend 8 to 12 hours every week just reconciling performance data from Meta, Google, and marketplaces.

So when ROAS dips on one platform but stays flat or even improves elsewhere, confusion compounds. Marketplaces add even more noise to the picture.

The problem is rarely the number alone. It is what follows. Teams hesitate before they act. Do you pause campaigns to limit risk, shift budgets to safer channels, or wait for more data that may or may not clarify the picture?

This blog breaks down how to interpret ROAS drops without freezing your next move. Let’s dive right in!

Inside any single ad platform, ROAS feels reassuringly simple. One dashboard. One number. One attribution model that the team knows well. Meta, Google, and marketplaces all present ROAS in a way that feels decisive because it is internally consistent.

You see spend, revenue, and a clean ratio tying the two together. That familiarity builds trust, especially when decisions need to be made quickly.

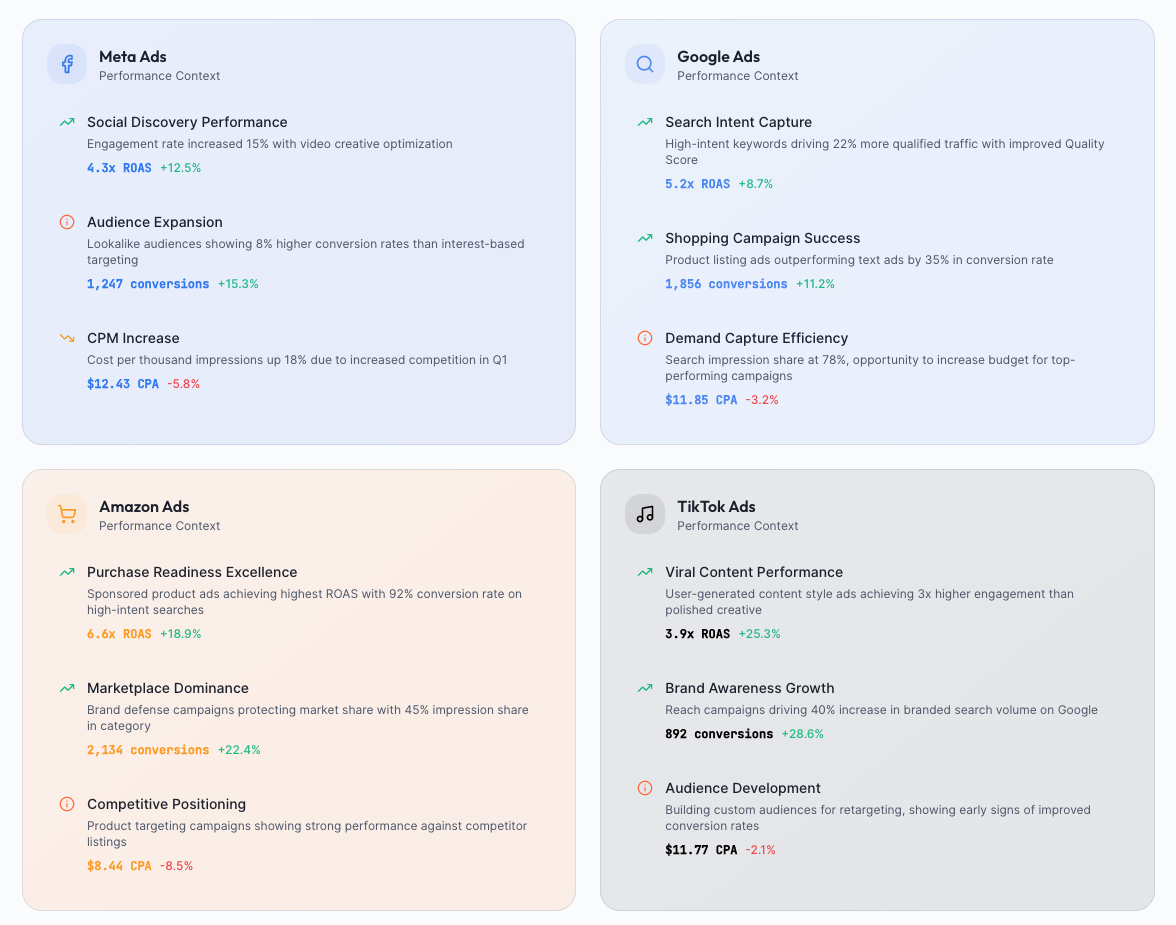

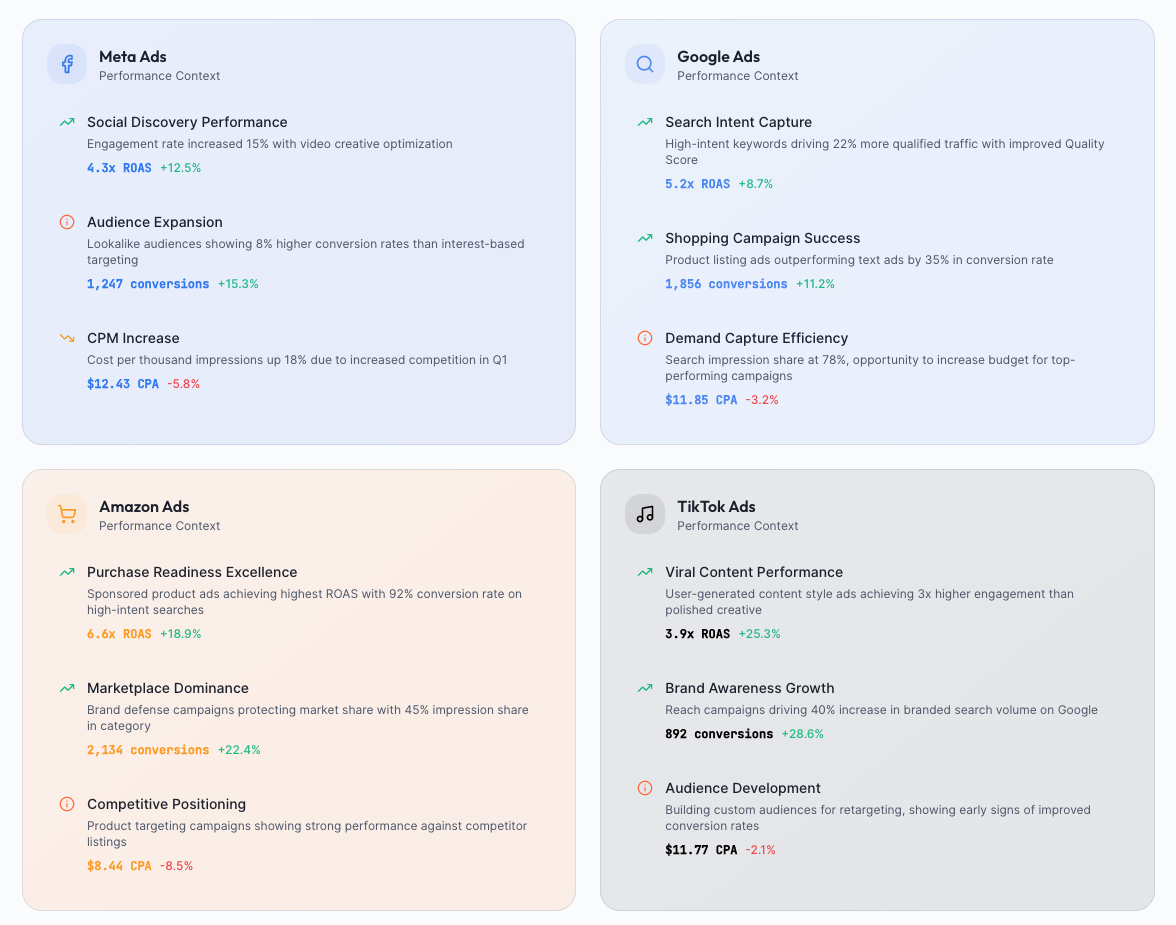

The moment you compare ROAS across platforms, the logic starts to wobble. Each channel measures success within its own context.

So when Meta reports a 3.2 ROAS and Google shows 4.5, they are not contradicting each other. They are describing different moments in the same customer journey.

ROAS works well inside platforms because it is designed to justify spend within that ecosystem, not to explain total business impact. Once journeys span multiple touchpoints, the story fragments.

In fact, 67% of conversions now involve two or more channels. When viewed together, platform ROAS stops being a final answer and becomes a partial signal that needs broader context to drive confident decisions.
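To make the "partial signal" point concrete, here is a minimal Python sketch comparing per-platform ROAS with a blended figure computed from total order revenue. The spend figures, the `total_order_revenue` value, and the names `ChannelReport` and `blended_roas` are hypothetical and chosen only so the platform ratios match the 3.2 and 4.5 mentioned above.

```python
from dataclasses import dataclass


@dataclass
class ChannelReport:
    name: str
    spend: float
    platform_revenue: float  # revenue the platform attributes to itself


def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue divided by spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


def blended_roas(reports: list[ChannelReport], total_order_revenue: float) -> float:
    """Total revenue from the order system divided by total ad spend."""
    total_spend = sum(r.spend for r in reports)
    return roas(total_order_revenue, total_spend)


# Hypothetical numbers, for illustration only.
reports = [
    ChannelReport("meta", spend=10_000.0, platform_revenue=32_000.0),
    ChannelReport("google", spend=8_000.0, platform_revenue=36_000.0),
]
total_order_revenue = 50_000.0  # assumed figure from the store's own order data

for r in reports:
    print(f"{r.name}: platform ROAS = {roas(r.platform_revenue, r.spend):.2f}")

platform_claimed = sum(r.platform_revenue for r in reports)
blended = blended_roas(reports, total_order_revenue)
print(f"revenue claimed by platforms: {platform_claimed:.0f}")
print(f"blended ROAS: {blended:.2f}")
```

In this made-up case the platforms together claim more revenue than the store actually recorded, which is what happens when two channels both take credit for the same order. The blended figure is lower than either platform number, and neither view is wrong; they answer different questions.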

ROAS drops rarely mean one clear thing. More often, they point in different directions at the same time. That is why reacting too quickly can create more damage than the drop itself.

Before changing budgets or pausing campaigns, it helps to understand what forces are actually pulling the number down.

ROAS does not fall on a level playing field. Meta typically looks at a 7-day click window, Google often extends to 30 days, and Amazon usually sits around 14 days. When demand slows or purchase cycles stretch, some platforms stop seeing credit sooner than others. The result is a ROAS drop that appears isolated, even though conversions still happen later elsewhere.
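The window effect can be sketched in a few lines of Python. The example below applies the three windows quoted above to one hypothetical set of conversions; the conversion lags, revenue values, and spend are invented for illustration, and real platform settings are configurable and may differ from these defaults.

```python
# Click-attribution windows as quoted in the text (days). Treat them as
# assumptions: actual account settings can be changed per platform.
ATTRIBUTION_WINDOW_DAYS = {"meta": 7, "amazon": 14, "google": 30}


def credited_revenue(conversions: list[tuple[int, float]], window_days: int) -> float:
    """Sum revenue from conversions whose click-to-purchase lag fits the window."""
    return sum(revenue for lag_days, revenue in conversions if lag_days <= window_days)


# Hypothetical conversions: (days between ad click and purchase, revenue).
conversions = [(1, 100.0), (5, 100.0), (10, 100.0), (20, 100.0), (28, 100.0)]
spend = 100.0  # same hypothetical spend applied to every window

results = {}
for platform, window in ATTRIBUTION_WINDOW_DAYS.items():
    revenue = credited_revenue(conversions, window)
    results[platform] = revenue / spend
    print(f"{platform} ({window}-day window): ROAS = {results[platform]:.1f}")
```

The same five purchases produce three different ROAS values purely because of where each window cuts off. If purchase cycles stretch so that more buyers land on day 10 instead of day 5, the 7-day figure falls while the 30-day figure does not move.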

Customer journeys are no longer linear. A shopper may discover your brand on Meta, search on Google a day later, and finally convert on a marketplace. Each platform claims its own slice of that journey, but none sees the full picture. When overlap increases, ROAS can fall in one channel simply because another captured the final click.

Strong organic performance can quietly distort paid results. If customers start going directly to Amazon or your site, paid ads may look weaker even while they are still driving awareness upstream. ROAS drops here do not signal poor performance. They signal that demand is being fulfilled elsewhere.

Sometimes ROAS dips have little to do with media efficiency. Pricing changes can shift buying behavior overnight. SKU availability issues can break the conversion path mid-journey. Algorithm updates may change traffic quality. Competitors can intensify bidding in one channel but not others. Add seasonality on top, where Q4 ROAS often runs 25 to 40% higher than Q1, and year-over-year comparisons need far more context than a single number can provide.
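As a rough illustration of adding seasonal context, the Python sketch below turns the 25 to 40% Q4 uplift quoted above into an expected Q1 range and checks whether an observed Q1 ROAS falls inside it. The function names and the sample ROAS values are hypothetical, and the uplift range is a rule of thumb from the text rather than a measured property of any specific account.

```python
def expected_q1_range(
    q4_roas: float, uplift_low: float = 0.25, uplift_high: float = 0.40
) -> tuple[float, float]:
    """If Q4 = Q1 * (1 + uplift), then Q1 = Q4 / (1 + uplift)."""
    if q4_roas <= 0:
        raise ValueError("q4_roas must be positive")
    return q4_roas / (1 + uplift_high), q4_roas / (1 + uplift_low)


def classify_q1(q4_roas: float, q1_roas: float) -> str:
    """Label a Q1 ROAS relative to the seasonally expected range."""
    low, high = expected_q1_range(q4_roas)
    if q1_roas < low:
        return "below seasonal range"
    if q1_roas > high:
        return "above seasonal range"
    return "within seasonal range"


# Hypothetical values: a Q4 ROAS of 4.2 implies an expected Q1 of roughly 3.0 to 3.36.
low, high = expected_q1_range(4.2)
print(f"expected Q1 ROAS: {low:.2f} to {high:.2f}")
print(classify_q1(4.2, 3.1))
print(classify_q1(4.2, 2.5))
```

A Q1 ROAS of 3.1 after a 4.2 quarter looks like a sharp drop on its own, yet it sits inside the seasonal range. A value of 2.5 falls outside it and is the kind of change that deserves a closer look.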

When ROAS drops, marketing managers are looking for an explanation they can trust. One that connects the dots across channels, teams, and timelines. The number itself is only the trigger. The real work begins after.

The core question is not which platform underperformed. It is what changed in the customer journey to cause this shift. ROAS is an outcome metric. It tells you what happened, but it cannot explain why it happened or what action will fix it. Without context and root cause analysis, reacting to ROAS alone often leads to decisions that feel urgent but lack confidence.

To move from reaction to reasoning, managers need integrated signals across multiple layers, such as media, product, and demand. Looking at just one dimension creates blind spots.

When these layers live in separate tools, explanations are incomplete. A ROAS drop may look like a media problem in one dashboard, a product issue in another, and a demand shift in a third. Without a unified view, managers are left stitching together narratives under pressure.

Behind every ROAS drop is a higher-stakes question. Can this change be clearly explained to a CMO? And can the next budget move be justified with evidence rather than instinct? Until those answers are solid, ROAS remains a source of hesitation instead of clarity.

Most of the effort to interpret ROAS happens before any insight appears, and that effort rarely shows up in reports or dashboards.

Marketing managers switch between 10 to 12 tools just to validate a single performance question. Meta Ads Manager, Google Ads, Shopify, Amazon Seller Central, and GA4 all tell slightly different stories. Teams cross-check revenue attribution, question tracking accuracy, and mentally map customer journeys to understand where conversions actually happened. Instead of analysis, the work becomes reconciliation.

This constant validation delays action. By the time inconsistencies are resolved, the market has already moved. Algorithms adjust, competitors change bids, and demand patterns shift. Insights arrive late, turning what should be proactive decisions into reactive ones.

The mental overhead adds up quickly. Marketing managers toggle between platforms 30 to 50 times a day just to monitor performance. That level of context switching fragments focus and increases hesitation. Waiting feels safer than acting on partial or conflicting signals, especially when budgets are under scrutiny.

Every day of inaction on a declining channel quietly wastes 2 to 5% of monthly ad spend. These losses compound fast. Meanwhile, time spent reconciling data is time not spent improving creatives, testing audiences, or scaling what works. When interpretation overwhelms execution, ROAS becomes a productivity tax rather than a decision-making tool.
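For a sense of scale, the per-day waste figure above can be turned into a quick back-of-the-envelope calculation. This Python sketch assumes the daily loss simply adds up linearly across idle days, which is a simplification, and the monthly spend and day count are hypothetical.

```python
def inaction_cost_range(
    monthly_spend: float,
    days_idle: int,
    daily_low: float = 0.02,
    daily_high: float = 0.05,
) -> tuple[float, float]:
    """Estimated wasted spend after `days_idle` days, as a (low, high) range.

    Uses the per-day share of monthly spend quoted in the text and assumes
    the loss accumulates linearly, one day at a time.
    """
    if monthly_spend < 0 or days_idle < 0:
        raise ValueError("monthly_spend and days_idle must be non-negative")
    return (
        monthly_spend * daily_low * days_idle,
        monthly_spend * daily_high * days_idle,
    )


# Hypothetical: 50,000 in monthly spend, three days spent reconciling data.
low, high = inaction_cost_range(50_000.0, 3)
print(f"estimated waste after 3 days: {low:,.0f} to {high:,.0f}")
```

Under these assumptions, three days of waiting on a 50,000 monthly budget puts roughly 3,000 to 7,500 at risk before any decision has been made.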

ROAS drops are inevitable. What creates risk is not the decline itself, but reacting without understanding what changed underneath.

When ROAS is treated as a trigger, decisions become rushed and defensive. When it is treated as a signal, it invites explanation, context, and confidence. Better interpretation leads to better decisions, not because teams move faster, but because they move with clarity.

If recurring ROAS drops are slowing decisions, Graas’ Hoppr helps marketing managers interpret performance across channels without the manual guesswork. Extract acts as the system of record, cleaning, standardizing, and modeling raw data into unified commerce tables. Hoppr becomes the system of intelligence, where teams ask questions, get clear answers, and take informed action.
